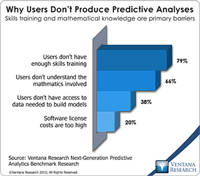

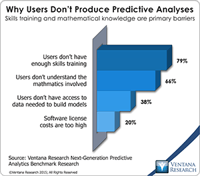

Predictive analytics is a rewarding yet challenging subject. In our benchmark research on next-generation predictive analytics, at least half the participants reported that predictive analytics allows them to achieve competitive advantage (57%) and create new revenue opportunities (50%). Yet even more participants said that users of predictive analytics don’t have enough skills training to produce their own analyses (79%) and don’t understand the mathematics involved (66%). (In the term...


Topics: Data Science, Business Analytics, Business Intelligence, Business Performance, Cloud Computing, Uncategorized

Qlik helped pioneer the visual discovery market with its QlikView product. In some respects, Qlik and its competitors also spawned the self-service trend rippling through the analytics market today. Their aim was to enable business users to perform analytics for themselves rather than to build a product with the perfect set of features for IT. After establishing success with end users, the company began to address more of the concerns of IT, eventually creating a robust enterprise-grade...


Topics: Analytics, Business Analytics, Business Collaboration, Business Intelligence, Cloud Computing, Data Preparation, Information Management, Uncategorized, Visualization, Qlik, Qlik Sense

It has been more than five years since James Dixon of Pentaho coined the term “data lake.” His original post suggests, “If you think of a data mart as a store of bottled water – cleansed and packaged and structured for easy consumption – the data lake is a large body of water in a more natural state.” The analogy is a simple one, but in my experience talking with many end users, there is still mystery surrounding the concept. In this post I’d like to clarify what a data lake is, review the...


Topics: Big Data, Data Science, Predictive Analytics, Social Media, Business Analytics, Business Intelligence, Data Governance, Data Lake, Governance, Risk & Compliance (GRC), Information Management, Uncategorized, Strata+Hadoop

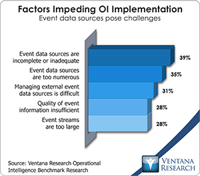

The emerging Internet of Things (IoT) is an extension of digital connectivity to devices and sensors in homes, businesses, vehicles and potentially almost anywhere. This innovation means that virtually any device can generate and transmit data about its operations – data to which analytics can be applied to facilitate monitoring and a range of automatic functions. To do these tasks IoT requires what Ventana Research calls operational intelligence (OI), a discipline that has evolved from the...


Topics: Real-time, Supply Chain Performance, IOT, Operational Intelligence, Uncategorized

On Monday, March 21, Informatica, a vendor of information management software, announced Big Data Management version 10.1. My colleague Mark Smith covered the introduction of v. 10.0 late last year, along with Informatica’s expansion from data integration to broader data management. The 10.1 release offers new capabilities, including some that address the hot topic of self-service data preparation for Hadoop, which Informatica is calling Intelligent Data Lake. The term “data...


Topics: Big Data, Business Intelligence, Data Governance, Data Preparation, Informatica, Information Applications, Information Management, Uncategorized, Strata+Hadoop

I recently attended the SAS Analyst Summit in Steamboat Springs, Colo. (Twitter hashtag #SASSB). The event offers an occasion for the company to discuss its direction and to assess its strengths and potential weaknesses. SAS is privately held, so customers and prospects cannot subject its performance to the same level of scrutiny as that of public companies, and thus events like this one provide a valuable source of additional information.


Topics: Big Data, Predictive Analytics, SAS, Analytics, Business Analytics, Business Collaboration, Business Intelligence, Business Performance, Information Applications, Information Management, Uncategorized, Visualization

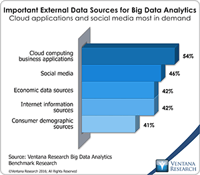

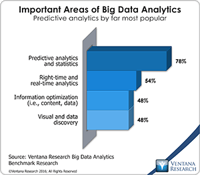

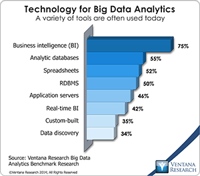

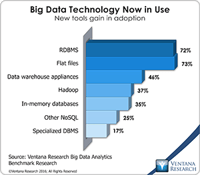

The big data market continues to expand and enable new types of analyses, new business models and new revenue streams for organizations that implement these capabilities. Following our previous research into big data and information optimization, we’ll investigate the technology trends affecting both of these domains as part of our 2016 research agenda.


Topics: Analytics, Business Analytics, Business Intelligence, Data Preparation, In-memory, Information Management, Operational Intelligence, Uncategorized

Some followers of Ventana Research may recall my work here several years ago. Here and elsewhere I have spent most of my career in the data and analytics markets matching user requirements with technologies to meet those needs. I’m happy to be returning to Ventana Research to resume investigating ways in which organizations can make the most of their data to improve their business processes; for a first look, please see our 2016 research agenda on Big Data and Information Optimization. I relish...


Topics: Big Data, Predictive Analytics, Analytics, Business Analytics, Business Intelligence, Information Management, Internet of Things, Operational Intelligence, Uncategorized, Unicorns, IT Performance Management (ITPM)

Last week I attended salesforce.com’s Dreamforce user conference in San Francisco (Twitter #DF10). As a user of salesforce applications for the last four years in my previous positions, I was familiar with its analytic capabilities, or lack thereof. Certainly you can accomplish simple reporting and produce dashboards displaying salesforce data, which is adequate for narrowly focused reporting and analysis. However, as a user I was underwhelmed. For example, there are no built-in capabilities...


Topics: Sales, Salesforce.com, Analytics, Cloud Computing, Customer Service, Uncategorized