Big data has become an integral part of information management. Nearly all organizations have some need to access big data sources and produce actionable information for decision-makers. Recognizing this connection, we merged these two topics when we put together our recently published research agendas for 2017. As we plan our research, we focus on current technologies and how they can be used to improve an organization’s performance. We then share those results with our readers.

Our research agenda for big data and information management begins with data preparation. We recently launched a benchmark research study on the topic and invite you to participate in it. End users today expect to access data sources directly, without support from IT. Data preparation addresses that expectation by making data more accessible throughout the information life cycle, ultimately adding value to business and IT processes. In our research, we'll explore how organizations establish data preparation to provide information responsiveness while also supporting enterprise requirements for data quality, governance and security.

The larger context of data preparation is the overall process of data integration. Data sources are larger, more diverse and more distributed, found in cloud-based systems, unstructured data and connected devices generating data throughout the enterprise. Data lakes and data virtualization are two ways in which organizations can integrate and provide access to these diverse data sources. We'll look at how organizations use data lakes, including whether they also use data virtualization, to build comprehensive data sets that support both analytics and data governance.

In a related area, organizations face challenges when they adopt new data types and sources without sufficient data governance. The open source ecosystem surrounding big data, which I have written about, requires new governance capabilities. That need has spawned new open source projects and attracted independent software vendors (ISVs) to fill the gaps with new governance tools. Our research will identify best practices for developing and implementing a comprehensive data governance strategy for all types of data, both new and old.

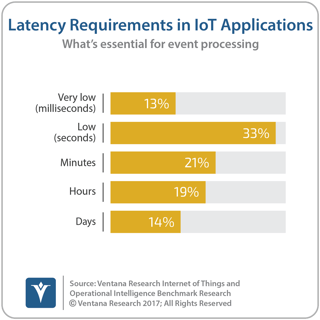

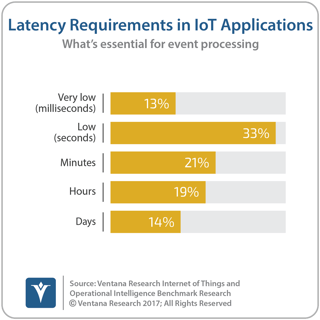

The Internet of Things, which includes an increasing number of devices that are instrumented and connected, drives a new set of requirements in addition to governance. IoT data is generated constantly, increasing demands for streaming data technologies combined with data science to enable continuous analytics. Our recent IoT benchmark research shows that nearly half (46%) of organizations [IoT Q25] consider it essential to process IoT events in seconds or subseconds. We have further research planned to complement our IoT research by studying how organizations are dealing with streams of machine data and IoT data. We’ll also be diving deeper into the IoT research we have completed to share more of our findings and their implications for organizations of all sorts.

As organizations increase their arrays of big data sources, including streams of IoT data, the importance of data science also increases. Traditional analytical tools and techniques are not effective at identifying all the valuable relationships in large bodies of data, nor are people effective at real-time processing. Data science helps tackle these issues. However, as our Next-Generation Predictive Analytics research shows, most organizations suffer from a lack of skilled data science resources. More than three-quarters (79%) of participants reported that users don’t have enough skills to perform their own data science analyses.

Machine learning provides a potential solution to this skills shortage, at least in part. Through self-learning algorithms, the development and refinement of data science routines become more automated, requiring fewer resources to develop models that predict and optimize outcomes. Such automation is critical in the areas of big data and IoT. Manual processes simply cannot support the volumes of data and the continuous analysis required. We’ll take a close look at how machine learning impacts organizations as part of our 2017 agenda.

At the core of all these issues, information management requirements continue to grow. Both IT and lines of business need to be able to manage large volumes of structured and unstructured data, often streaming on a continuous basis. Big data technologies continue to proliferate to support streaming, at-rest and in-memory data. We will be studying how organizations deal with this proliferation of technologies to extract business value from the data that they gather.

For the full list of research planned, please download and review our Big Data and Information Management research agenda. I invite you to participate in this research as we conduct it during the year. I look forward to sharing the insights we discover and to helping your organization apply those insights to its business needs.

Regards,

David Menninger

SVP & Research Director

Follow me on Twitter @dmenningerVR and connect with me on LinkedIn.